Nvidia and AI on the edge

By Yliess HATI

Oct 10, 2018



In the last four years, corporations have been investing a lot in AI and particularly in Deep Learning and Edge Computing. While the theory has taken huge steps forward and new algorithms are invented each day, hardware has evolved as well.

Nvidia, known for its graphics hardware, has become the leader in hardware supporting machine learning software. It is now rare to hear of an AI project in which Nvidia is not involved.

But how did such a company obtain a leading position in the AI market? The answer lies in how well machine learning workloads can be parallelized: GPUs are very good at running many small computations at once, while CPUs are poorly suited to such tasks. GPUs are useful for any project that needs parallel computation.
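To illustrate why these workloads parallelize so well, here is a minimal sketch in plain Python of one fully connected neural network layer. The function names and the sample values are hypothetical and chosen only for illustration. The point is that each output neuron is a weighted sum that does not depend on any other neuron's result, so all of them can be computed at the same time. Python threads are used here only to show that independence; they do not provide the speedup a GPU would.

```python
from concurrent.futures import ThreadPoolExecutor


def neuron_output(weights_row, inputs, bias):
    """Weighted sum for one output neuron; it needs no other neuron's result."""
    return sum(w * x for w, x in zip(weights_row, inputs)) + bias


def dense_layer_sequential(weights, inputs, biases):
    """Compute the neurons one after another, as a single CPU core would."""
    return [neuron_output(row, inputs, b) for row, b in zip(weights, biases)]


def dense_layer_parallel(weights, inputs, biases, workers=4):
    """Hand each neuron to a worker; the order of execution does not matter."""
    def task(row_and_bias):
        row, bias = row_and_bias
        return neuron_output(row, inputs, bias)

    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in input order, whatever the execution order.
        return list(pool.map(task, zip(weights, biases)))


# Hypothetical layer: 3 inputs, 4 output neurons.
weights = [
    [0.5, -1.0, 2.0],
    [1.0, 1.0, 1.0],
    [0.0, 0.25, -0.5],
    [2.0, 0.0, 1.0],
]
inputs = [1.0, 2.0, 3.0]
biases = [0.0, 0.5, 1.0, -1.0]

sequential = dense_layer_sequential(weights, inputs, biases)
parallel = dense_layer_parallel(weights, inputs, biases)
print(sequential == parallel)
```

A real layer has thousands of such neurons and is applied to many inputs at once, which is the kind of work the hundreds of cores on a GPU are built for.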

GPU technology is therefore well matched to the challenges of Deep Learning. But the amount of computation needed keeps growing. Today, Deep Learning models are trained on GPU farms. Cloud Computing has played a major role in the Machine Learning field, but it still raises some issues that we will detail later.

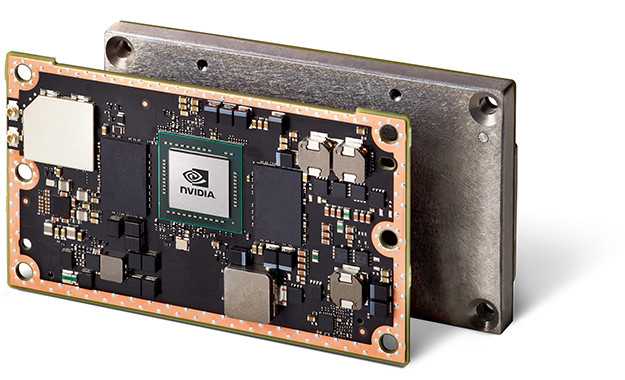

Jetson

The Jetson products from Nvidia are embedded computing devices designed for AI. The latest version is the Jetson TX2 and its technical sheet is impressive: 256 CUDA cores, 8 GB of LPDDR4 RAM, a CPU combining an HMP Dual Denver 2 (2 MB L2) with a Quad ARM® A57 (2 MB L2), and 32 GB of storage. All of this fits in the size of a credit card: 50 mm x 87 mm. These components are well suited to running Machine Learning algorithms. At $470, its price is competitive for these specifications.

Edge Computing

As we have seen, the pipeline leading to production is essentially Cloud-based. These algorithms are greedy and need an enormous amount of data to be trained, which is why the AI field is so closely tied to the Big Data ecosystem. They also owe a big part of their performance to GPUs. But when we deploy AI services to the world, not all clients have access to enough computing power to run these algorithms on their own device. The usual answer is to deploy AI models on GPU servers. This requires client data to leave the device, which exposes it to possible security problems. It also adds latency, which is not acceptable for real-time or time-sensitive use cases.

The Jetson technology addresses many of these issues while still being able to run AI software, and Deep Learning models in particular. The Jetson module lets us process user data on the edge, without a connection to a computing center. The data does not leave the device, so security is greatly improved and the client is reassured. The network latency also disappears, leaving only the inference time on the device itself.
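To make the latency argument concrete, here is a small worked calculation in Python. Every number in it is a made-up assumption for illustration, not a measurement of a Jetson module or of any Cloud service. It compares the time available per video frame with the time needed to get a result, once through a remote server and once on the device.

```python
def frame_budget_ms(fps):
    """Time available to process one frame at a given frame rate."""
    return 1000.0 / fps


def cloud_latency_ms(uplink_ms, inference_ms, downlink_ms):
    """A Cloud result pays for the network in both directions plus inference."""
    return uplink_ms + inference_ms + downlink_ms


def meets_budget(latency_ms, fps):
    """True when a result arrives before the next frame is due."""
    return latency_ms <= frame_budget_ms(fps)


# Illustrative assumptions only.
FPS = 30                 # camera frame rate
UPLINK_MS = 40.0         # device to server
DOWNLINK_MS = 40.0       # server to device
SERVER_INFERENCE_MS = 5.0
EDGE_INFERENCE_MS = 20.0  # slower chip, but no network hop

cloud = cloud_latency_ms(UPLINK_MS, SERVER_INFERENCE_MS, DOWNLINK_MS)
edge = EDGE_INFERENCE_MS

print(f"budget per frame: {frame_budget_ms(FPS):.1f} ms")
print(f"cloud: {cloud:.1f} ms, within budget: {meets_budget(cloud, FPS)}")
print(f"edge:  {edge:.1f} ms, within budget: {meets_budget(edge, FPS)}")
```

Under these assumptions the server computes faster than the embedded device, yet the network round trip alone exceeds the per-frame budget. This is why time-sensitive applications benefit from keeping inference on the device.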

Even with such technology, Cloud Computing is still necessary to train the deep learning models. That gives us a new pipeline for the production of embedded ML algorithms:

- Build a model with a Deep Learning framework (TensorFlow, Keras, PyTorch, Caffe, and others)

- Cloud training with GPU farms (in most cases)

- Model optimization with Nvidia's optimization framework, TensorRT (model size/weight reduction while trying to preserve accuracy)

- Deployment on the electronic device equipped with a Jetson module

- Embedded inferences

As you may have noticed, a new step has been added to the pipeline: model optimization. Reducing the size of the model is necessary both to fit it on the device and to produce faster inferences. For this task, Nvidia provides a framework: TensorRT. Its aim is to reduce the model's size while keeping its accuracy. The depth of a model and its size have a direct impact on its inference speed, and this is what TensorRT aims to optimize.
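One widely used way to shrink a model is to store its weights at a lower numerical precision. TensorRT supports reduced-precision inference such as FP16 and INT8. The sketch below is not TensorRT's implementation and does not use its API. It is a minimal, plain Python illustration of symmetric 8-bit quantization, with hypothetical function names and weights, showing how the size drops by a factor of four while the values stay close to the originals.

```python
import struct


def quantize_int8(weights):
    """Symmetric linear quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the 8-bit integers."""
    return [q * scale for q in quantized]


# Hypothetical weights of a tiny layer.
weights = [0.8, -0.31, 0.05, -1.27, 0.64]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Storage cost: 4 bytes per 32-bit float versus 1 byte per 8-bit integer.
fp32_bytes = len(struct.pack(f"{len(weights)}f", *weights))
int8_bytes = len(struct.pack(f"{len(q)}b", *q))

# Rounding moves each weight by at most half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"size: {fp32_bytes} bytes -> {int8_bytes} bytes")
print(f"largest weight error: {max_error:.6f} (step size {scale:.6f})")
```

Whether accuracy survives such a reduction depends on the model and the data, which is why the optimized model should be validated before it is deployed on the device.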

Use cases

Nvidia's Jetson products are already in use in many projects and have proved their performance. Here are some examples:

- Skydio R1 (Autonomous camera):

The R1 quadcopter from Skydio is a completely autonomous drone. Powered by the Jetson module and AI, it can film people and follow them while dodging obstacles. It can fly at full speed through a forest, allowing small teams and amateurs to film without hiring a professional pilot.

- Unsupervised.ai Maryam (warehouse intelligent robots):

Maryam robots help warehouses move toward Industry 4.0. Powered by the Jetson module and AI, they avoid obstacles and map their environment, and they can be used without adding sensors to the warehouse. They are more efficient than the specialized robots of the previous generation and can be put to work without any transition period. These robots are also modular and can be adapted to different tasks: moving boxes, checking that they are present, and much more.

- Pixevia (Data from cameras):

Pixevia also uses Jetson technology and AI to extract data from videos. Its solutions can detect parking spots and the vehicles or objects parked in them, count the empty spots and find their location. They can also read vehicle license plates. Pixevia is also active in stores, where security cameras count customers and draw bounding boxes around them on screen in real time. Its solutions are deployed on intelligent cameras as well as on drones equipped with Jetson.