Mesos Introduction

Nov 15, 2017


Apache Mesos is an open source cluster manager designed to run and optimize distributed systems. Mesos makes it possible to manage and share resources between nodes and across applications in a fine-grained, dynamic way. This article covers the Mesos architecture, its fundamentals, and its support for NVIDIA GPUs.

Mesos Architecture

Mesos consists of several elements:

- Master daemon: it runs on the master nodes and controls the slave daemons.

- Slave daemon: it runs on the slave nodes and makes it possible to launch tasks.

- Framework: also known as a Mesos application, it is composed of:

  - A scheduler that asks the master for available resources.

  - One or more executors that launch applications on the slave nodes.

- Offer: a list of the resources (such as CPU and memory) available on a slave node.

- Task: a unit of work that runs on a slave node. It can be any application (bash script, SQL query, Hadoop job, …).

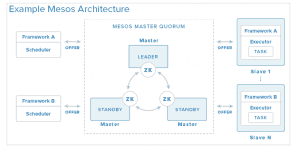

- ZooKeeper: it coordinates the master nodes.

High availability

To avoid a single point of failure (SPOF), several masters are needed: one leading master and several standby masters. ZooKeeper replicates its data on N master nodes to form a ZooKeeper quorum, and it is responsible for coordinating the election of the leading master. At least 3 masters are required for high availability.
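The figure of 3 masters follows from majority voting: a quorum stays available only while a strict majority of its members is alive. The sketch below is plain arithmetic, not a Mesos or ZooKeeper API, and the function names are chosen for illustration only.

```python
def quorum_size(ensemble_size: int) -> int:
    """Return the smallest strict majority of an ensemble."""
    if ensemble_size < 1:
        raise ValueError("ensemble_size must be at least 1")
    return ensemble_size // 2 + 1


def tolerated_failures(ensemble_size: int) -> int:
    """Return how many members can fail while a majority survives."""
    return ensemble_size - quorum_size(ensemble_size)


if __name__ == "__main__":
    for n in (1, 2, 3, 5):
        print(f"{n} nodes: quorum={quorum_size(n)}, "
              f"tolerated failures={tolerated_failures(n)}")
```

With 1 or 2 nodes no failure can be tolerated, which is why 3 is the smallest useful size.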

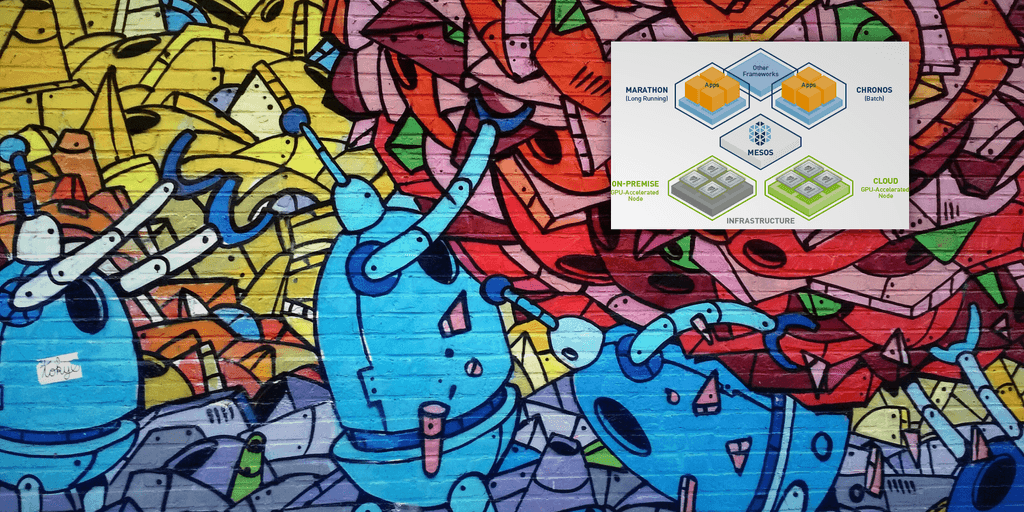

Marathon

Marathon is a container orchestrator for Mesos that allows you to launch applications. It is equipped with a REST API to start and stop applications.
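As an illustration of the REST API, the sketch below builds the HTTP requests that create and delete an application through Marathon's /v2/apps endpoint. The host name, the application id and the command are placeholders, and the requests are only built, not sent.

```python
import json
import urllib.request

# Assumption: placeholder address, replace it with your own Marathon endpoint.
MARATHON_URL = "http://marathon.example.com:8080"


def build_app_request(app_id, cmd, cpus=0.1, mem=32, instances=1):
    """Build (without sending) a POST /v2/apps request that starts an app."""
    app = {"id": app_id, "cmd": cmd, "cpus": cpus,
           "mem": mem, "instances": instances}
    return urllib.request.Request(
        url=f"{MARATHON_URL}/v2/apps",
        data=json.dumps(app).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def build_delete_request(app_id):
    """Build (without sending) a DELETE /v2/apps/<id> request that stops an app."""
    return urllib.request.Request(
        url=f"{MARATHON_URL}/v2/apps/{app_id.lstrip('/')}",
        method="DELETE",
    )


if __name__ == "__main__":
    start = build_app_request("/hello", "while true; do echo hello; sleep 5; done")
    stop = build_delete_request("/hello")
    print(start.get_method(), start.full_url)
    print(stop.get_method(), stop.full_url)
    # To actually send a request: urllib.request.urlopen(start)
```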

Chronos

Chronos is a framework for Mesos developed by Airbnb to replace the standard crontab. It is a complete, distributed, fault-tolerant scheduler that facilitates the orchestration of tasks. Chronos has a REST API, which makes it possible to create scheduled tasks, for example from a web interface.
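Chronos describes recurring jobs with ISO 8601 repeating intervals (number of repetitions, start time, period) rather than crontab expressions. The sketch below builds such a schedule and the matching job request. The host, port and API path are assumptions: the path prefix in particular differs between Chronos versions, so it should be checked against your installation. The request is built but not sent.

```python
import json
import urllib.request
from datetime import datetime, timezone

# Assumptions: placeholder address and path, to be adapted to your setup.
CHRONOS_URL = "http://chronos.example.com:4400"
SCHEDULED_JOB_PATH = "/v1/scheduler/iso8601"


def iso8601_schedule(start, interval, repetitions=None):
    """Format 'R[n]/<start>/<interval>'; no count means repeat forever."""
    if start.tzinfo is None:
        raise ValueError("start must be timezone-aware")
    count = "" if repetitions is None else str(repetitions)
    start_utc = start.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    return f"R{count}/{start_utc}/{interval}"


def build_job_request(name, command, schedule, owner):
    """Build (without sending) the request that registers a scheduled job."""
    job = {"name": name, "command": command,
           "schedule": schedule, "owner": owner}
    return urllib.request.Request(
        url=f"{CHRONOS_URL}{SCHEDULED_JOB_PATH}",
        data=json.dumps(job).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # A daily job starting on the publication date of this article.
    first_run = datetime(2017, 11, 15, 2, 0, tzinfo=timezone.utc)
    schedule = iso8601_schedule(first_run, "P1D")
    request = build_job_request("nightly-report", "echo report",
                                schedule, "ops@example.com")
    print(schedule)
    print(request.get_method(), request.full_url)
```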

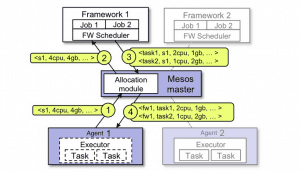

Operating logic

The diagram above explains how a task is started and orchestrated:

- Agent 1 informs the leading master of the resources available on the slave node it is associated with. The master then applies an allocation policy, which here decides to offer all of the available resources to framework 1.

- The master informs framework 1 of the resources available on agent 1.

- The framework's scheduler replies to the master "I will run two tasks on agent 1", depending on the resources offered.

- The master sends both tasks to the agent, which allocates the resources to the two new tasks.
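The four steps above can be sketched as a toy simulation. This is not the Mesos API: the Agent, Scheduler and Master classes and their methods are hypothetical names that only mirror the roles described in the list.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    name: str
    cpus: float
    mem: int
    running: list = field(default_factory=list)

    def available(self):
        """Step 1: report the free resources of this node."""
        return {"agent": self.name, "cpus": self.cpus, "mem": self.mem}

    def launch(self, task):
        """Step 4: allocate resources to a task and start it."""
        if task["cpus"] > self.cpus or task["mem"] > self.mem:
            raise ValueError(f"not enough resources for {task['name']}")
        self.cpus -= task["cpus"]
        self.mem -= task["mem"]
        self.running.append(task["name"])


class Scheduler:
    def __init__(self, pending):
        self.pending = list(pending)

    def resource_offer(self, offer):
        """Step 3: choose the pending tasks that fit in the offer."""
        cpus, mem = offer["cpus"], offer["mem"]
        accepted = []
        for task in list(self.pending):
            if task["cpus"] <= cpus and task["mem"] <= mem:
                accepted.append(task)
                cpus -= task["cpus"]
                mem -= task["mem"]
                self.pending.remove(task)
        return accepted


class Master:
    def offer_cycle(self, agent, scheduler):
        offer = agent.available()                # step 1
        tasks = scheduler.resource_offer(offer)  # steps 2 and 3
        for task in tasks:                       # step 4
            agent.launch(task)
        return tasks


if __name__ == "__main__":
    agent = Agent("agent-1", cpus=4.0, mem=4096)
    scheduler = Scheduler([
        {"name": "task-1", "cpus": 1.0, "mem": 1024},
        {"name": "task-2", "cpus": 2.0, "mem": 2048},
    ])
    Master().offer_cycle(agent, scheduler)
    print(agent.running, agent.available())
```

In this simplified model the master offers everything to a single framework. A real allocation policy would share offers between several frameworks.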

Containerizer

The containerizer is the component that launches containers. It is responsible for the isolation and management of container resources.

Creating and launching a containerizer:

- The agent creates a containerizer according to the --containerizers option.

- To launch a container, the containerizer type (mesos, docker or composing) must be specified, otherwise the default one is used. The executor in use can be identified from the TaskInfo command:

  - mesos-executor -> default executor

  - mesos-docker-executor -> docker executor

Container types:

Mesos supports different types of containers:

- Composing containerizer: combines the Docker and Mesos containerizers.

- Docker containerizer: manages containers using the Docker engine.

- Mesos containerizer: the native container runtime of Mesos.
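The containerizers are selected when the agent starts. The sketch below assembles a mesos-agent command line using the --master, --work_dir and --containerizers flags. The ZooKeeper hosts and the work directory are placeholders, and the helper function is hypothetical. When several containerizers are listed, the agent composes them and tries them in the order given.

```python
import shlex

# Containerizer implementations accepted by the --containerizers flag.
SUPPORTED = ("docker", "mesos")


def agent_command(master, containerizers, work_dir="/var/lib/mesos"):
    """Return the argument list that starts an agent with the given containerizers."""
    unknown = [c for c in containerizers if c not in SUPPORTED]
    if unknown:
        raise ValueError(f"unsupported containerizer(s): {', '.join(unknown)}")
    return [
        "mesos-agent",
        f"--master={master}",
        f"--work_dir={work_dir}",
        f"--containerizers={','.join(containerizers)}",
    ]


if __name__ == "__main__":
    # Assumption: placeholder ZooKeeper ensemble with three nodes.
    master = "zk://zk1:2181,zk2:2181,zk3:2181/mesos"
    print(shlex.join(agent_command(master, ["docker", "mesos"])))
```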

NVIDIA GPUs and Mesos

Using GPUs with Mesos is easy. The agents must first be configured so that they include the GPUs when they report available resources to the master. The masters must also be configured so that they can, in turn, inform the frameworks of these resources.

Tasks are launched in the same way, with a GPU resource type added to the request. However, unlike CPUs, memory and disk, GPUs can only be requested in whole numbers. If a fractional quantity is requested, launching the task fails with an error of type TASK_ERROR.
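As a sketch of this rule, the helper below builds the resource list of a task in the JSON form of Mesos scalar resources (name, type, scalar value) and rejects fractional GPU counts before the task is submitted. The function names are hypothetical, and the check is a client-side convenience, not part of Mesos itself.

```python
def scalar_resource(name, value):
    """Describe one scalar resource in the JSON form used by Mesos."""
    return {"name": name, "type": "SCALAR", "scalar": {"value": value}}


def task_resources(cpus, mem, gpus=0):
    """Build the resources of a task; GPUs must be a whole number."""
    if isinstance(gpus, bool) or not isinstance(gpus, (int, float)):
        raise TypeError("gpus must be a number")
    if gpus < 0 or not float(gpus).is_integer():
        # Mesos would answer such a request with TASK_ERROR.
        raise ValueError("gpus must be a non-negative whole number")
    resources = [scalar_resource("cpus", cpus), scalar_resource("mem", mem)]
    if gpus:
        resources.append(scalar_resource("gpus", int(gpus)))
    return resources


if __name__ == "__main__":
    # Fractional CPUs are fine, fractional GPUs are not.
    print(task_resources(cpus=0.5, mem=128, gpus=1))
    try:
        task_resources(cpus=0.5, mem=128, gpus=0.5)
    except ValueError as error:
        print("rejected:", error)
```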

For now, only the Mesos containerizer is able to launch tasks with NVIDIA GPUs. Normally this is not a limitation, because the Mesos containerizer natively supports Docker images.

In addition, Mesos integrates the operating logic of nvidia-docker, which exposes the CUDA Toolkit to developers and data scientists. This makes it possible to mount the drivers and tools required by the GPU directly in the container. You can build your container locally and deploy it easily with Mesos.
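As an illustration, the sketch below builds a Marathon application definition that runs a Docker image with one GPU through the Mesos containerizer (container type MESOS). It assumes that GPU scheduling is enabled in Marathon and on the agents. The application id, the nvidia/cuda image and the nvidia-smi command are example values, and the helper function is hypothetical.

```python
import json


def gpu_app(app_id, image, cmd, gpus=1, cpus=1, mem=128):
    """Return a Marathon app definition using the Mesos containerizer with GPUs."""
    if isinstance(gpus, bool) or not isinstance(gpus, int) or gpus < 1:
        raise ValueError("gpus must be a whole number of at least 1")
    return {
        "id": app_id,
        "cmd": cmd,
        "cpus": cpus,
        "mem": mem,
        "gpus": gpus,
        # MESOS selects the Mesos containerizer, which can run Docker images.
        "container": {"type": "MESOS", "docker": {"image": image}},
    }


if __name__ == "__main__":
    app = gpu_app("/gpu-test", "nvidia/cuda", "nvidia-smi")
    # This JSON document is what would be posted to Marathon's /v2/apps endpoint.
    print(json.dumps(app, indent=2))
```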

Conclusion

Mesos is a solution that allows companies to deploy and manage Docker containers, while sharing the available resources of their infrastructures. In addition, thanks to the Mesos containerizer, we can perform deep learning in a distributed way or share GPU resources between multiple users.