Nobody* puts Java in a Container

Oct 28, 2017


This talk covered the issues of putting Java in a container and how, in its latest versions, the JDK is now more aware of the container it is running in. It was given by Joerg Schad, Distributed Software Engineer at Mesosphere, at the Open Source Summit 2017 in Prague.

What are the issues of putting Java in a container?

How does the JVM interact with the isolation provided by the container?

Containers

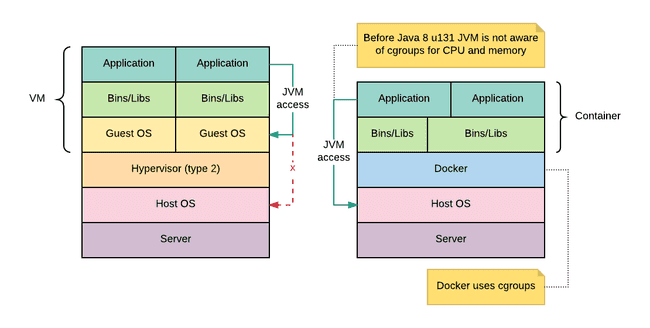

A container is a convenient way to package and ship applications. Because a container relies directly on the host kernel, the isolation it provides is weaker than that of a virtual machine, in exchange for better performance.

A container is therefore very fast to spin up and uses less memory and CPU than a VM.

There are two complementary technologies used to isolate a container from the rest of the system: CGroups and Namespaces. Combined, they offer a lightweight yet powerful way of isolating processes from the rest of the system.

Namespaces

A namespace gives a process its own view of the system. For example, a process only sees the PIDs, mount points and filesystems of its own namespace.
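The effect of a PID namespace is visible from inside the JVM. The minimal sketch below assumes Java 9+ for `ProcessHandle` and uses an illustrative class name; it prints the PID the JVM sees. When the JVM is the container's entrypoint this may well be 1, while the host knows the same process under a different PID.

```java
// Minimal sketch (assumes Java 9+ for ProcessHandle; class name is illustrative).
// Inside a container with its own PID namespace, the JVM sees the PID assigned in
// that namespace (possibly 1 if it is the entrypoint), not the PID the host uses.
public class PidView {

    // PID of the current JVM as seen from its own PID namespace.
    static long currentPid() {
        return ProcessHandle.current().pid();
    }

    public static void main(String[] args) {
        System.out.println("PID seen by the JVM: " + currentPid());
    }
}
```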

CGroups

While namespaces control a process's view of the system, CGroups limit the resources used by a process (or a group of processes), for example:

- CPU usage

- Memory limits

- Network and disk IO

- Devices
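CGroup limits are exposed as plain files, and those files are what a container-aware runtime has to read. The minimal sketch below assumes the CGroup filesystem is mounted at the usual `/sys/fs/cgroup` and checks both the cgroup v2 file `memory.max` and the cgroup v1 file `memory/memory.limit_in_bytes`. The class name and the "unlimited" threshold are illustrative choices, not JVM internals.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.OptionalLong;

// Minimal sketch: read the memory limit the CGroup imposes on this process.
// Assumes the common mount point /sys/fs/cgroup; real setups may differ.
public class CgroupMemoryLimit {

    // cgroup v2 exposes the limit in memory.max, cgroup v1 in memory/memory.limit_in_bytes.
    static final Path V2_LIMIT = Paths.get("/sys/fs/cgroup/memory.max");
    static final Path V1_LIMIT = Paths.get("/sys/fs/cgroup/memory/memory.limit_in_bytes");

    // cgroup v1 reports "no limit" as a huge page-aligned number; treat anything
    // at or above this threshold as unlimited (an assumed heuristic).
    static final long V1_UNLIMITED_THRESHOLD = Long.MAX_VALUE / 2;

    // Parses the content of a limit file; an empty result means "unlimited".
    static OptionalLong parseLimit(String raw) {
        String value = raw.trim();
        if (value.equals("max")) {          // cgroup v2 spelling of "no limit"
            return OptionalLong.empty();
        }
        long bytes = Long.parseLong(value);
        if (bytes >= V1_UNLIMITED_THRESHOLD) {
            return OptionalLong.empty();
        }
        return OptionalLong.of(bytes);
    }

    // Reads whichever limit file exists; empty if none is found or no limit is set.
    static OptionalLong readLimit() throws IOException {
        for (Path p : new Path[] {V2_LIMIT, V1_LIMIT}) {
            if (Files.isReadable(p)) {
                return parseLimit(new String(Files.readAllBytes(p)));
            }
        }
        return OptionalLong.empty();
    }

    public static void main(String[] args) throws IOException {
        OptionalLong limit = readLimit();
        System.out.println(limit.isPresent()
                ? "CGroup memory limit: " + limit.getAsLong() + " bytes"
                : "no CGroup memory limit found");
    }
}
```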

Java

The current problems come from how the JVM gathers information about the system resources available to it, without taking the limits set by CGroups into account.

Memory

A Java program uses memory not only for the heap but also for the garbage collector threads, the just-in-time (JIT) compiler, …
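To see that the heap is only part of the picture, the JVM's own `MemoryMXBean` reports heap and non-heap usage separately. A small sketch with an illustrative class name; note that even the non-heap figure excludes thread stacks and other native allocations, so the real process footprint is larger still.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryMXBean;

// Minimal sketch: compare heap and non-heap usage as reported by the JVM itself.
// Non-heap covers pools such as Metaspace and the JIT code cache; thread stacks and
// other native memory are not counted in either number.
public class MemoryFootprint {

    private static final MemoryMXBean MEMORY = ManagementFactory.getMemoryMXBean();

    // Bytes currently used by the Java heap (objects).
    static long heapUsed() {
        return MEMORY.getHeapMemoryUsage().getUsed();
    }

    // Bytes currently used by non-heap memory pools.
    static long nonHeapUsed() {
        return MEMORY.getNonHeapMemoryUsage().getUsed();
    }

    public static void main(String[] args) {
        System.out.printf("heap used:     %,d bytes%n", heapUsed());
        System.out.printf("non-heap used: %,d bytes%n", nonHeapUsed());
    }
}
```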

Before Java 8u131, the JVM determined the default heap size from the amount of memory reported in /proc. In a container, however, this value reflects the memory of the host rather than the memory allocated to the container by its CGroup definition.
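The mismatch can be reproduced by reading the host-wide figure in `/proc/meminfo`, similar in spirit to the information the pre-8u131 JVM relied on, and comparing it with the CGroup limit. In this sketch the CGroup limit is simply passed in as a parameter, and the class and method names are hypothetical.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.List;

// Minimal sketch of the mismatch: /proc/meminfo reports the host's memory,
// not the container's CGroup limit. The CGroup limit is supplied by the caller.
public class MemInfoVsCgroup {

    // Extracts MemTotal (reported in kB) from /proc/meminfo lines and returns bytes.
    static long memTotalBytes(List<String> meminfoLines) {
        for (String line : meminfoLines) {
            if (line.startsWith("MemTotal:")) {
                // e.g. "MemTotal:       16314344 kB" -> ["MemTotal:", "16314344", "kB"]
                String[] parts = line.trim().split("\\s+");
                return Long.parseLong(parts[1]) * 1024L;
            }
        }
        throw new IllegalArgumentException("MemTotal not found");
    }

    // What a container-aware runtime should size from: the smaller of the two values.
    static long effectiveMemory(long hostBytes, long cgroupLimitBytes) {
        return Math.min(hostBytes, cgroupLimitBytes);
    }

    public static void main(String[] args) throws IOException {
        long host = memTotalBytes(Files.readAllLines(Paths.get("/proc/meminfo")));
        System.out.println("MemTotal from /proc/meminfo: " + host + " bytes");
    }
}
```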

The consequence is simple yet problematic. By default, the maximum heap size is 1/4 of the physical memory. Since this is computed from the physical memory of the host, a Java process running inside a container may well try to use more memory than the container is allowed. It then receives a kill signal for exceeding the limit enforced by the CGroup.
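As a worked example of the 1/4 rule, take hypothetical sizes: a 64 GiB host and a container limited to 2 GiB. The sketch ignores the caps HotSpot applies to the default heap on very large machines.

```java
// Worked example of the default rule: max heap = 1/4 of the memory the JVM believes
// it has. Sizes are illustrative, not measurements; HotSpot's caps are ignored.
public class DefaultHeapMath {

    static final long GIB = 1024L * 1024L * 1024L;

    // Default maximum heap when the JVM sees `visibleBytes` of physical memory.
    static long defaultMaxHeap(long visibleBytes) {
        return visibleBytes / 4;
    }

    // True when the default heap alone can outgrow the container's CGroup limit.
    static boolean heapMayExceedLimit(long hostBytes, long containerLimitBytes) {
        return defaultMaxHeap(hostBytes) > containerLimitBytes;
    }

    public static void main(String[] args) {
        long host = 64 * GIB;   // memory of the host, as seen through /proc
        long limit = 2 * GIB;   // memory granted to the container by its CGroup
        System.out.println("default max heap: " + defaultMaxHeap(host) / GIB + " GiB");
        System.out.println("may exceed limit: " + heapMayExceedLimit(host, limit));
    }
}
```

Here the JVM would allow a 16 GiB heap inside a container that may only use 2 GiB.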

Two solutions exist:

- use the Java option `-Xmx` to set the maximum heap size manually, which reduces the portability offered by containers;

- use JDK 8u131 or JDK 9 with the `-XX:+UnlockExperimentalVMOptions -XX:+UseCGroupMemoryLimitForHeap` flags, so that the JVM sizes the heap according to the CGroup memory limit.
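One way to check which of these options took effect is to print the heap limit the JVM actually chose, together with the flags it was started with. The `docker run` line in the comment is a hypothetical invocation; the image name and the limit are placeholders.

```java
import java.lang.management.ManagementFactory;
import java.util.List;

// Minimal sketch to check the heap limit the JVM picked inside a container.
// Hypothetical invocation (image name and limit are placeholders):
//   docker run -m 512m <jdk-8u131-image> java -XX:+UnlockExperimentalVMOptions \
//       -XX:+UseCGroupMemoryLimitForHeap HeapCheck
// With these flags the default max heap should be derived from the 512 MB CGroup
// limit (roughly a quarter of it) instead of from the host's memory.
public class HeapCheck {

    // Maximum heap the JVM will attempt to use, in bytes.
    static long maxHeapBytes() {
        return Runtime.getRuntime().maxMemory();
    }

    // JVM options this process was started with, to confirm the flags were applied.
    static List<String> jvmFlags() {
        return ManagementFactory.getRuntimeMXBean().getInputArguments();
    }

    public static void main(String[] args) {
        System.out.println("max heap: " + maxHeapBytes() / (1024 * 1024) + " MiB");
        System.out.println("JVM flags: " + jvmFlags());
    }
}
```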

CPU

The problem is very similar for CPU usage. There are two ways of limiting the CPU usage of a process with CGroups:

- CPU sets: assign specific CPUs to a process;

- CPU shares: assign a relative weight that determines the proportion of CPU time the process receives when the CPUs are contended.
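The two CPU controls can be illustrated with a little arithmetic. The sketch below counts the CPUs in a cpuset list such as `0-3,6` (the list format used by the CGroup `cpuset.cpus` file) and computes the proportion of CPU time a group gets from its shares when every group is busy. Sample values and names are illustrative.

```java
// Minimal sketch of the two CGroup CPU controls. Sample values are illustrative.
public class CgroupCpu {

    // Counts the CPUs in a cpuset list like "0-3,6" (-> 5 CPUs).
    static int countCpuset(String cpus) {
        int count = 0;
        for (String part : cpus.trim().split(",")) {
            if (part.isEmpty()) {
                continue;
            }
            int dash = part.indexOf('-');
            if (dash < 0) {
                count += 1;                       // single CPU, e.g. "6"
            } else {
                int from = Integer.parseInt(part.substring(0, dash));
                int to = Integer.parseInt(part.substring(dash + 1));
                count += to - from + 1;           // inclusive range, e.g. "0-3"
            }
        }
        return count;
    }

    // Fraction of CPU time a group gets when all groups compete:
    // its weight divided by the sum of all weights.
    static double shareFraction(int myShares, int... allShares) {
        int total = 0;
        for (int s : allShares) {
            total += s;
        }
        return (double) myShares / total;
    }

    public static void main(String[] args) {
        System.out.println("CPUs in \"0-3,6\": " + countCpuset("0-3,6"));
        // Two busy containers with 1024 and 512 shares competing for the same CPUs:
        System.out.println("fraction for 512 shares: " + shareFraction(512, 1024, 512));
    }
}
```

When the other groups are idle, a group may use more than its share, which is why shares behave like weights rather than hard limits.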

The JVM is not aware that it is running inside a container and bases its default settings on the number of CPUs available on the host instead of the number allowed by the CGroup. From this value, the JVM starts too many GC and JIT threads, which leads to performance issues.
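The thread-count problem can be made concrete. `Runtime.availableProcessors()` is the standard API for the CPU count the JVM sees; `parallelGcThreads` below is an approximation of HotSpot's default `ParallelGCThreads` heuristic (all CPUs up to 8, then about 5/8 of the remainder), written for illustration rather than taken from the JVM source.

```java
// Minimal sketch: the JVM sizes GC thread pools from the CPU count it sees.
// parallelGcThreads() approximates HotSpot's default heuristic; it is not JVM code.
public class CpuSizing {

    // All CPUs up to 8, then roughly 5/8 of the CPUs beyond 8.
    static int parallelGcThreads(int cpus) {
        return cpus <= 8 ? cpus : 8 + ((cpus - 8) * 5) / 8;
    }

    public static void main(String[] args) {
        int seen = Runtime.getRuntime().availableProcessors();
        System.out.println("CPUs seen by the JVM: " + seen);
        System.out.println("GC threads under the heuristic: " + parallelGcThreads(seen));
        // Hypothetical case: on a 64-CPU host with a container limited to 2 CPUs,
        // a JVM unaware of the CGroup sees 64 CPUs and sizes for 43 GC threads, not 2.
    }
}
```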

This issue is also addressed in the newest JDK: the JVM is now aware of the number of CPUs made available to it through CGroup CPU sets. CPU shares, however, are not taken into account, so it is not recommended to rely on CPU shares to limit a Java container.

Conclusion

Unlike virtual machines, containers do not hide the underlying hardware from the process. Since JDK 8u131, the JVM takes CGroup limits into account, and the issues described above are mostly solved.

References

- Java and containers - Red Hat Blog

- Java is a first class citizen in a Docker ecosystem now - Cloakable

- Java SE support for Docker CPU and memory limits - Oracle Blog

- [*Nobody puts baby in the corner](https://www.urbandictionary.com/define.php?term=nobody%20puts%20Baby%20in%20the%20corner)