LXD: The Missing Piece

Dec 28, 2018

- Categories

- Containers Orchestration

- Tags

- CPU

- Linux

- LXD

- VM

- Docker

- Kubernetes


LXD stands for Linux Container Daemon. It is yet another container technology, but a very different one that stands apart from the pack. It is not necessarily better, much faster or more secure, but it resolves an issue that other container technologies don’t. Many of us moved too fast from traditional virtual machines to application containers because of the hype around Docker, Kubernetes and the others. But there is an older type of container that covers a large range of use cases that we still have today and that will stay with us for a while.

Virtualization in a few words

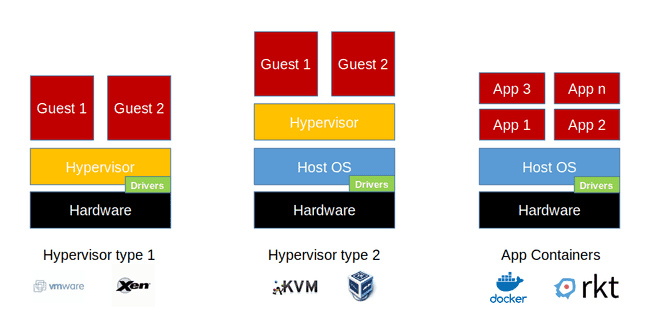

The figure above clearly shows the difference between hypervisors (type 1 and type 2) and containers. Most of us know the difference between a virtual machine and a container. For those who don’t, don’t worry, I have got you covered.

Virtualization is a technology that lies to a guest OS. The hypervisor emulates a hardware layer, so the guest sees it and thinks it is running on real hardware. The guest sends its hardware requests to that layer using the drivers of the emulated hardware. The hypervisor intercepts those requests and translates or redirects them to the host’s physical hardware using the drivers of the physical hardware. Who controls those drivers determines the type of the hypervisor. If the hypervisor itself is in control, it is a type 1 hypervisor. If the host OS manages the drivers on behalf of the hypervisor, it is a hosted, or type 2, hypervisor. Type 1 hypervisors are the most powerful and are commonly used in production environments. Type 2 hypervisors are much simpler to install and use, so they are typically found in development and testing environments.

Virtualization’s weakness comes from its most powerful feature

Virtualization is fascinating. Hypervisors can run multiple OS types and emulate multiple hardware components (CPU, disks, network etc.), so a wide range of operating systems can run on emulated hardware that differs from the physical hardware. But there is no silver bullet and no solution without drawbacks. Emulation, and the passing of calls between the emulated and the physical hardware, comes at the cost of a performance overhead. On top of that, each virtual machine has to run a full guest OS, which is slow, heavy on disk and wasteful, since the kernel and drivers are duplicated in every single virtual machine. Along the way, many technologies were introduced to reduce this overhead, such as para-virtualization and the hardware virtualization acceleration built into CPUs. While hypervisors tried to reduce the emulation overhead, containers removed it.

This is the era of Linux

For a long time, Linux and Open Source were the underdogs, but not anymore. The majority of applications run on Linux. Containers became popular because of the rising number of applications that run on Linux, or perhaps it was the other way around: containers also brought a lot of users to Linux. Since the standard application OS is Linux, there is no need to emulate hardware or to run an entire guest OS.

“with the wind in my hair, and the earth beneath my feet, I am one with nature, my spirit is free”[1], said the container on a Linux host.

A container is a group of isolated processes that run on the host kernel alongside other processes. Container processes neither want nor need to know about host processes, so a process inside a container cannot see what happens outside of it. The host, on the other hand, manages container processes like any other processes.

Containers rely on the idea that the host kernel can be shared across all containers. Long-established Linux features such as cgroups, namespaces and a dedicated root filesystem isolate those containers, giving each one its own namespaces (Network, Mount, PID, User …). So there is no need for emulation and its added overhead, since all containers talk directly to the kernel, which executes all privileged actions. But is there a catch? Only a guest that the host kernel supports can run on it, hence only Linux can run as a container. You may think this is a disadvantage of Linux containers, as it limits the choice of OS used to deploy applications to Linux. But Linux is a very powerful, secure and generic operating system. So in practice, the disadvantage lies with the “others” for not being Linux.
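The namespaces mentioned above can be observed from any Linux process. The following is a minimal sketch, assuming a Linux host where `/proc/<pid>/ns` is readable; the helper names `parse_ns_link`, `namespaces_of` and `shared_namespaces` are made up for this illustration. It lists the namespaces a process belongs to and compares two processes: those that share the same identifier for a namespace live in the same namespace.

```python
import os
import re

# Links under /proc/<pid>/ns look like "pid:[4026531836]".
NS_LINK = re.compile(r"^(?P<kind>[a-z_]+):\[(?P<inode>\d+)\]$")


def parse_ns_link(target):
    """Split a namespace link such as 'pid:[4026531836]' into (kind, inode)."""
    match = NS_LINK.match(target)
    if match is None:
        raise ValueError(f"not a namespace link: {target!r}")
    return match.group("kind"), int(match.group("inode"))


def namespaces_of(pid="self"):
    """Return {entry name: inode} for a process, or {} if /proc is unavailable."""
    ns_dir = f"/proc/{pid}/ns"
    result = {}
    try:
        entries = os.listdir(ns_dir)
    except OSError:
        return result  # not on Linux, or not allowed to inspect this process
    for name in entries:
        try:
            _, inode = parse_ns_link(os.readlink(os.path.join(ns_dir, name)))
        except (OSError, ValueError):
            continue  # skip entries we cannot read or understand
        result[name] = inode
    return result


def shared_namespaces(a, b):
    """Names of the namespaces that two {name: inode} maps have in common."""
    return sorted(name for name in a if name in b and a[name] == b[name])


if __name__ == "__main__":
    for name, inode in sorted(namespaces_of().items()):
        print(f"{name:20} {inode}")
```

Run on the host, and then again inside a container, the script should report different identifiers for the namespaces that isolate the container. Inspecting other processes (for example `namespaces_of(1)`) usually requires root privileges.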

LXD solves what Docker doesn’t

An application container provides only the environment strictly necessary to run a process. So the container remains very light, very fast, and easy to maintain and upgrade. That’s what a Docker container provides. Machine containers, on the other hand, are containers made to run a complete “VM-like” environment. LXD (LXC + LXD) provides the virtual machine experience without the virtual machines!

LXC provides an environment to run a complete operating system, with all the necessary services. Simply put, you can do on an LXC container what you do on a virtual machine. LXD adds the management capabilities of a hypervisor. It can efficiently and easily adjust resource allocations while the container is running, take snapshots, restore them, migrate a container from one host to another, copy it …
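To give an idea of what these management operations look like, here is a minimal sketch that builds the corresponding `lxc` command lines (the LXD command-line client) and prints them. The container, snapshot, copy and remote names are hypothetical, as are the limit values, and the helper names `lxc_plan` and `run_plan` are made up for this example. Nothing is executed unless `dry_run` is turned off and the `lxc` binary is installed.

```python
import shlex
import shutil
import subprocess


def lxc_plan(container, snapshot, clone, remote):
    """Return lxc invocations for a resize, snapshot, restore, copy and move."""
    return [
        # Adjust resource limits (illustrative values).
        ["lxc", "config", "set", container, "limits.cpu", "2"],
        ["lxc", "config", "set", container, "limits.memory", "1GB"],
        # Take a snapshot, then roll the container back to it.
        ["lxc", "snapshot", container, snapshot],
        ["lxc", "restore", container, snapshot],
        # Copy the container under a new name.
        ["lxc", "copy", container, clone],
        # Move it to another LXD host, assumed to be already added as a remote.
        ["lxc", "move", container, f"{remote}:{container}"],
    ]


def run_plan(plan, dry_run=True):
    """Print each command; run it only if dry_run is False and lxc exists."""
    have_lxc = shutil.which("lxc") is not None
    for cmd in plan:
        print(shlex.join(cmd))
        if not dry_run and have_lxc:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # All four names below are placeholders for this sketch.
    run_plan(lxc_plan("web01", "before-upgrade", "web01-copy", "host2"))
```

The dry run is the default on purpose: restoring a snapshot or moving a container changes real state, so the commands are best reviewed before being run against an actual LXD installation.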

LXD tries to replace VMs and hypervisors for a wide range of use cases. Docker is very powerful, but adopting it is challenging for developers and especially for operations, because it implies changing the way we operate the platform. Many applications cost a fortune to develop, and nobody intends to restructure them to suit a Docker environment. To sum up, LXD provides all the advantages of containers without the need to change the way we do operations, as it is transparent to the application and to the operators of the guest OS.

What is next?

The next article is a quick tour of LXD: we will set up an environment, configure LXD, then spin up some containers and manage them.

[1] Quote by Christy Ann Martine