MLOps

MLOps extends DevOps (development and operations) practices to putting machine learning (ML) models into production. It focuses on automation and monitoring at every step of building an ML system: creating reproducible pipelines and reusable software environments, testing, integration, deployment, and monitoring model performance.
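The following minimal Python sketch shows what "reproducible pipelines" can mean in practice. It is not tied to any particular MLOps tool. It assumes a toy dataset stored as a list of dicts and a hypothetical `train` step. The helper names `fingerprint`, `train` and `run_pipeline` are illustrative only. Fingerprinting the input data and fixing the random seed lets a run be repeated exactly and tied back to the data it used.

```python
import hashlib
import json
import random


def fingerprint(rows):
    """Return a SHA-256 digest of the dataset so a run can be tied to its exact input."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def train(rows, seed):
    """Hypothetical toy 'training' step: predicts the mean target of a random half of the rows.

    A dedicated, seeded Random instance makes the sample, and therefore the model,
    identical across reruns with the same data and seed.
    """
    rng = random.Random(seed)
    sample = rng.sample(rows, k=max(1, len(rows) // 2))
    return sum(r["y"] for r in sample) / len(sample)


def run_pipeline(rows, seed=42):
    """Run the pipeline and return a metadata record to store alongside the model."""
    model = train(rows, seed)
    return {"data_sha256": fingerprint(rows), "seed": seed, "model": model}


data = [{"x": i, "y": 2 * i} for i in range(10)]
first = run_pipeline(data)
second = run_pipeline(data)
print(first == second)  # True: same data and same seed give the same record
```

In a real pipeline, the same record would typically be written to an experiment tracker or a model registry so that any deployed model can be traced back to its data and settings.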

MLOps involves many additional components compared to DevOps, because Data Science projects differ in nature from software development projects. In Data Science:

- many different programming languages and frameworks are used, so projects do not have a monolithic structure.

- model development includes an experimentation step, during which the performance of the models and the datasets used need to be tracked.

- testing needs to cover the model, the data and the software components.

- pipelines can be long and complex, and deploying them can require automating many steps that were performed manually while the system was being built.

- once in production, the model's performance needs to be monitored continuously, since changes in the incoming data can degrade it. When that happens, the model should be retrained.
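Monitoring for changes in incoming data can be sketched with the Population Stability Index (PSI), which is one common drift metric among several. The following Python sketch rests on several assumptions: a single numeric feature, equal-width bins derived from the training (reference) data, and a retraining threshold of 0.2. That threshold is a widely used rule of thumb, not a standard. The functions `psi` and `needs_retraining` are hypothetical helpers and do not belong to any library.

```python
import math


def psi(reference, current, bins=10):
    """Population Stability Index between a reference sample and a current sample.

    Assumption: equal-width bins spanning the reference range; current values
    outside that range are clipped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def needs_retraining(reference, current, threshold=0.2):
    """Flag drift when PSI exceeds the (assumed) rule-of-thumb threshold."""
    return psi(reference, current) > threshold


reference = [i / 100 for i in range(1000)]  # feature values seen at training time
shifted = [v + 5 for v in reference]        # incoming data whose distribution has moved
print(needs_retraining(reference, reference))  # False
print(needs_retraining(reference, shifted))    # True
```

In production, a check like this would typically run on a schedule against recent inputs. A `True` result would then trigger the retraining pipeline rather than retrain the model inline.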

MLOps is a practice for collaboration and communication between data scientists and operations professionals to help manage the production ML lifecycle. Like the DevOps and DataOps approaches, MLOps seeks to increase automation and improve the quality of production ML while also addressing business and regulatory requirements.

- Learn more

- Wikipedia

Related articles

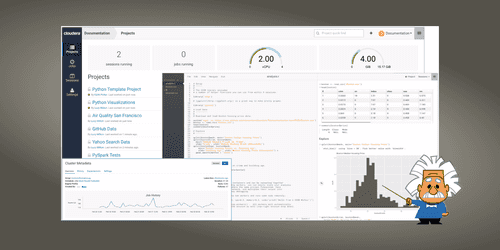

Introduction to Cloudera Data Science Workbench

Categories: Data Science | Tags: Azure, Cloudera, Docker, Git, Kubernetes, Machine Learning, MLOps, Notebook

Cloudera Data Science Workbench is a platform that allows Data Scientists to create, manage, run and schedule data science workflows from their browser. Thus it enables them to focus on their main…

Feb 28, 2019

Machine Learning model deployment

Categories: Big Data, Data Engineering, Data Science, DevOps & SRE | Tags: DevOps, Operation, AI, Cloud, Machine Learning, MLOps, On-premises, Schema

“Enterprise Machine Learning requires looking at the big picture […] from a data engineering and a data platform perspective,” lectured Justin Norman during the talk on the deployment of Machine…

Sep 30, 2019

MLflow tutorial: an open source Machine Learning (ML) platform

Categories: Data Engineering, Data Science, Learning | Tags: AWS, Azure, Databricks, Deep Learning, Deployment, Machine Learning, MLflow, MLOps, Python, Scikit-learn

Introduction and principles of MLflow. With increasingly cheaper computing power and storage and at the same time increasing data collection in all walks of life, many companies integrated Data Science…

Mar 23, 2020

Faster model development with H2O AutoML and Flow

Categories: Data Science, Learning | Tags: Automation, Cloud, H2O, Machine Learning, MLOps, On-premises, Open source, Python

Building Machine Learning (ML) models is a time-consuming process. It requires expertise in statistics, ML algorithms, and programming. On top of that, it also requires the ability to translate a…

Dec 10, 2020

TensorFlow Extended (TFX): the components and their functionalities

Categories: Big Data, Data Engineering, Data Science, Learning | Tags: Beam, Data Engineering, Pipeline, CI/CD, Data Science, Deep Learning, Deployment, Machine Learning, MLOps, Open source, Python, TensorFlow

Putting Machine Learning (ML) and Deep Learning (DL) models in production certainly is a difficult task. It has been recognized as more failure-prone and time-consuming than the modeling itself, yet…

Mar 5, 2021

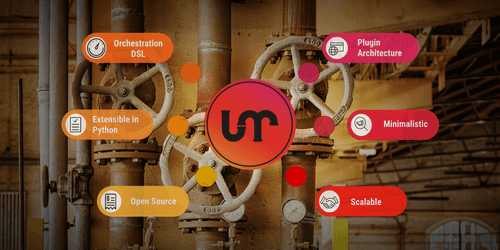

Apache Liminal: when MLOps meets GitOps

Categories: Big Data, Containers Orchestration, Data Engineering, Data Science, Tech Radar | Tags: Data Engineering, CI/CD, Data Science, Deep Learning, Deployment, Docker, GitOps, Kubernetes, Machine Learning, MLOps, Open source, Python, TensorFlow

Apache Liminal is open-source software that provides a solution for deploying end-to-end Machine Learning pipelines. It centralizes all the steps needed to construct Machine Learning…

Mar 31, 2021

H2O in practice: a Data Scientist feedback

Categories: Data Science, Learning | Tags: Automation, Cloud, H2O, Machine Learning, MLOps, On-premises, Open source, Python

Automated machine learning (AutoML) platforms are gaining popularity and becoming an important new tool in the data scientists’ toolbox. A few months ago, I introduced H2O, an open-source platform for…

Sep 29, 2021

H2O in practice: a protocol combining AutoML with traditional modeling approaches

Categories: Data Science, Learning | Tags: Automation, Cloud, H2O, Machine Learning, MLOps, On-premises, Open source, Python, XGBoost

H2O comes with many functionalities. The second part of the series H2O in practice proposes a protocol to combine AutoML modeling with traditional modeling and optimization approaches. The objective…

Nov 12, 2021

GitOps in practice, deploy Kubernetes applications with ArgoCD

Categories: Containers Orchestration, DevOps & SRE, Adaltas Summit 2021 | Tags: Argo CD, CI/CD, Git, GitOps, IaC, Kubernetes

GitOps is a set of practices for deploying applications using Git. Application definitions, configurations, and connectivity are stored in a version control system such as Git. Git then serves as…

Dec 16, 2021