Cloudera partner in Paris, France

Deploy real-time Big Data analytics on public-private clouds with a privacy-first architecture.

Adaltas is a long-time partner of Cloudera with a strong track record of solutions deployed to production.

We integrate the Cloudera distributions into our customers' IT infrastructure following industry best practices, including automated deployments, end-to-end security, and high availability. Our data engineers and data scientists are trained to use and leverage the various components of the Cloudera Big Data platforms.

Discover Cloudera with Adaltas

To help you promote Cloudera within your company, we offer 2 days of consulting to our new customers.

Contact us for a detailed presentation of the Cloudera platform and its potential impact in the context of your projects.

Combine public and private clouds

Cloudera leads the hybrid data cloud market for IT departments and builds new, easy-to-use cloud solutions.

Public Cloud

- Support for the major cloud providers: AWS, Azure, and GCP.

- Cloud infrastructure agnostic and easily portable applications.

- One platform to serve all data lifecycle use cases with a unified security and governance model

Unified Analytics practice

- For data science, data engineering and analytical use cases

- Accessible to technical and business users

- Unified platform that eliminates silos and speeds up the discovery of data-driven insights

Innovation and data-driven culture

- A shared data experience that applies consistent security, governance, and metadata

- True hybrid capability with support for public cloud, multi-cloud, and on-premises deployments

Methodology and roadmap for success

Adaltas works with your team to leverage the Cloudera platform with a comprehensive methodology. Our experts are certified by Cloudera as well as by the major cloud providers, including Microsoft Azure, Amazon AWS, and Google GCP.

Qualify the use case

- What is the business challenge today?

- What business outcome and value are you hoping to achieve?

Qualify the data

- Is the data in the cloud?

- Describe the data: type, size, format, speed, ...

- Understand the complexity of the Big Data the client is working with.

Qualify the solution

- Describe the current technology ecosystem and data pipeline architecture.

- Who are the data users? (data scientists, data engineers, business users)

State-of-the-art platform for analytics and AI in the cloud

The extensive Spark ML libraries and the integration with popular frameworks such as TensorFlow and PyTorch make Cloudera a leading AI platform. Additionally, the introduction of MLflow simplifies the management of the machine learning lifecycle and makes it more productive.

For data analysts

- Apache Hive to transform and analyze complex, multi-structured data at scale in Cloudera environments.

- Apache Impala for real-time interactive analysis of the data stored in Hadoop using a native SQL environment.

For data engineers

- Load, transform, cleanse, join, explore, and analyze data.

- Create, execute, manage, operate, and optimize dataflows on a comprehensive platform.

For data scientists

- Enterprise data science and machine learning using Apache Spark and your favorite libraries.

- Leverage the Cloudera Data Science Workbench (CDSW) to deliver end-to-end data science and machine learning workflows.

Articles related to Cloudera

Storage and massive processing with Hadoop

Categories: Big Data | Tags: Hadoop, HDFS, Storage

Apache Hadoop is a system for building shared storage and processing infrastructures for large volumes of data (multiple terabytes or petabytes). Hadoop clusters are used by a wide range of projects…

By David WORMS

Nov 26, 2010

Virtual machines with static IP for your Hadoop development cluster

Categories: Infrastructure | Tags: Ambari, Hortonworks, Red Hat, VirtualBox, VM, VMware, Cloudera, Network

While I am about to install and test Ambari, this article is the occasion to illustrate how I set up my development environment with multiple virtual machines. Ambari, the deployment and monitoring…

By David WORMS

Feb 27, 2013

The state of Hadoop distributions

Categories: Big Data | Tags: Hortonworks, Intel, Oracle, Hadoop, Cloudera

Apache Hadoop is of course made available for download on its official webpage. However, downloading and installing the several components that make a Hadoop cluster is not an easy task and is a…

By David WORMS

May 11, 2013

Hadoop development cluster of virtual machines with static IP using VirtualBox

Categories: Infrastructure | Tags: Ambari, Hortonworks, Red Hat, VirtualBox, VM, VMware, Cloudera, Network

A few days ago, I explained how to set up a cluster of virtual machines with static IPs and Internet access suitable to host your Hadoop cluster locally for development. At the time I made use of VMware…

By David WORMS

Mar 14, 2013

MiNiFi: Data at Scales & the Values of Starting Small

Categories: Big Data, DevOps & SRE, Infrastructure | Tags: MiNiFi, C++, HDF, NiFi, Cloudera, HDP, IOT

This talk briefly presented Apache NiFi and explained where MiNiFi came from: essentially, it is a minimal NiFi agent deployed on small devices to bring data to a cluster's NiFi pipeline (e.g. IoT…

Jul 8, 2017

Exposing Kafka on two different networks

Categories: Infrastructure | Tags: Cyber Security, VLAN, Kafka, Cloudera, CDH, Network

A Big Data setup usually requires multiple network interfaces, so let's see how to set up Kafka on more than one of them. Kafka is an open-source stream processing software platform system…

Jul 22, 2017

Cloudera Sessions Paris 2017

Categories: Big Data, Events | Tags: Altus, CDSW, SDX, EC2, Azure, Cloudera, CDH, Data Science, PaaS

Adaltas was at the Cloudera Sessions on October 5, where Cloudera showcased their new products and offerings. Below you'll find a summary of what we witnessed. Note: the information was aggregated in…

Oct 16, 2017

Apache Hadoop YARN 3.0 – State of the union

Categories: Big Data, DataWorks Summit 2018 | Tags: GPU, Hortonworks, Hadoop, HDFS, MapReduce, YARN, Cloudera, Data Science, Docker, Release and features

This article covers the "Apache Hadoop YARN: state of the union" talk held by Wangda Tan from Hortonworks during the DataWorks Summit 2018. What is Apache YARN? As a reminder, YARN is one of the two…

May 31, 2018

Components for CDH and HDP

Categories: Big Data | Tags: Flume, Hortonworks, Hadoop, Hive, Oozie, Sqoop, Zookeeper, Cloudera, CDH, HDP

I was interested in comparing the different components distributed by Cloudera and Hortonworks. This also gives us an idea of the versions packaged by the two distributions. At the time of this writing…

By David WORMS

Sep 22, 2013

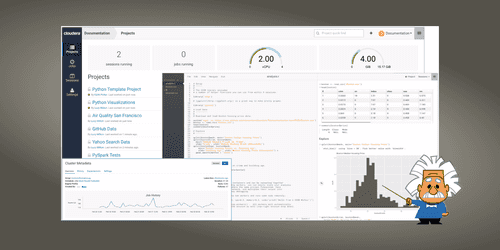

Introduction to Cloudera Data Science Workbench

Categories: Data Science | Tags: Azure, Cloudera, Docker, Git, Kubernetes, Machine Learning, MLOps, Notebook

Cloudera Data Science Workbench is a platform that allows Data Scientists to create, manage, run and schedule data science workflows from their browser. Thus it enables them to focus on their main…

Feb 28, 2019

Running Apache Hive 3, new features and tips and tricks

Categories: Big Data, Business Intelligence, DataWorks Summit 2019 | Tags: JDBC, LLAP, Druid, Hadoop, Hive, Kafka, Release and features

Apache Hive 3 brings a number of nice new features to the data warehouse. Unfortunately, like many major FOSS releases, it comes with a few bugs and not much documentation. It has been available since…

Jul 25, 2019

Notes on the Cloudera Open Source licensing model

Categories: Big Data | Tags: CDSW, License, Cloudera Manager, Open source

Following the publication of its Open Source licensing strategy on July 10, 2019 in an article called “our Commitment to Open Source Software”, Cloudera broadcasted a webinar yesterday October 2…

By David WORMS

Oct 25, 2019

Cloudera CDP and Cloud migration of your Data Warehouse

Categories: Big Data, Cloud Computing | Tags: Azure, Cloudera, Data Hub, Data Lake, Data Warehouse

While one of our customers is anticipating a move to the Cloud, and with the recent announcement of Cloudera CDP availability in mid-September during the Strata conference, it seems like the appropriate…

By David WORMS

Dec 16, 2019

Using Cloudera Deploy to install Cloudera Data Platform (CDP) Private Cloud

Categories: Big Data, Cloud Computing | Tags: Ansible, Cloudera, CDP, Cluster, Data Warehouse, Vagrant, IaC

Following our recent Cloudera Data Platform (CDP) overview, we cover how to deploy CDP Private Cloud on your local infrastructure. It is entirely automated with the Ansible cookbooks published by…

Jul 23, 2021

An overview of Cloudera Data Platform (CDP)

Categories: Big Data, Cloud Computing, Data Engineering | Tags: SDX, Big Data, Cloud, Cloudera, CDP, CDH, Data Analytics, Data Hub, Data Lake, Data lakehouse, Data Warehouse

Cloudera Data Platform (CDP) is a cloud computing platform for businesses. It provides integrated and multifunctional self-service tools in order to analyze and centralize data. It brings security and…

Jul 19, 2021

Introducing Trunk Data Platform: the Open-Source Big Data Distribution Curated by TOSIT

Categories: Big Data, DevOps & SRE, Infrastructure | Tags: DevOps, Hortonworks, Ansible, Hadoop, HBase, Knox, Ranger, Spark, Cloudera, CDP, CDH, Open source, TDP

Ever since Cloudera and Hortonworks merged, the choice of commercial Hadoop distributions for on-prem workloads essentially boils down to CDP Private Cloud. CDP can be seen as the “best of both worlds…

Apr 14, 2022

Keycloak deployment in EC2

Categories: Cloud Computing, Data Engineering, Infrastructure | Tags: Security, EC2, Authentication, AWS, Docker, Keycloak, SSL/TLS, SSO

Why use Keycloak? Keycloak is an open-source identity provider (IdP) that supports single sign-on (SSO). An IdP is a tool to create, maintain, and manage identity information for principals and to provide…

By Stephan BAUM

Mar 14, 2023

Data platform requirements and expectations

Categories: Big Data, Infrastructure | Tags: Data Engineering, Data Governance, Data Analytics, Data Hub, Data Lake, Data lakehouse, Data Science

A big data platform is a complex and sophisticated system that enables organizations to store, process, and analyze large volumes of data from a variety of sources. It is composed of several…

By David WORMS

Mar 23, 2023

CDP part 1: introduction to end-to-end data lakehouse architecture with CDP

Categories: Cloud Computing, Data Engineering, Infrastructure | Tags: Data Engineering, Hortonworks, Iceberg, AWS, Azure, Big Data, Cloud, Cloudera, CDP, Cloudera Manager, Data Warehouse

Cloudera Data Platform (CDP) is a hybrid data platform for big data transformation, machine learning and data analytics. In this series we describe how to build and use an end-to-end big data…

By Stephan BAUM

Jun 8, 2023

CDP part 2: CDP Public Cloud deployment on AWS

Categories: Big Data, Cloud Computing, Infrastructure | Tags: Infrastructure, AWS, Big Data, Cloud, Cloudera, CDP, Cloudera Manager

The Cloudera Data Platform (CDP) Public Cloud provides the foundation upon which full featured data lakes are created. In a previous article, we introduced the CDP platform. This article is the second…

Jun 19, 2023

CDP part 3: Data Services activation on CDP Public Cloud environment

Categories: Big Data, Cloud Computing, Infrastructure | Tags: Infrastructure, AWS, Big Data, Cloudera, CDP

One of the big selling points of Cloudera Data Platform (CDP) is its mature managed service offering. These services are easy to deploy on-premises, in the public cloud, or as part of a hybrid solution. The…

Jun 27, 2023

CDP part 5: user permissions management on CDP Public Cloud

Categories: Big Data, Cloud Computing, Data Governance | Tags: Ranger, Cloudera, CDP, Data Warehouse

When you create a user or a group in CDP, it requires permissions to access resources and use the Data Services. This article is the fifth in a series of six: CDP part 1: introduction to end-to-end…

Jul 18, 2023

CDP part 6: end-to-end data lakehouse ingestion pipeline with CDP

Categories: Big Data, Data Engineering, Learning | Tags: Business intelligence, Data Engineering, Iceberg, NiFi, Spark, Big Data, Cloudera, CDP, Data Analytics, Data Lake, Data Warehouse

In this hands-on lab session we demonstrate how to build an end-to-end big data solution with Cloudera Data Platform (CDP) Public Cloud, using the infrastructure we have deployed and configured over…

Jul 24, 2023